Fully Autonomous AI SDRs Are Failing in 2026: The Data Behind the Industry Reset

TL;DR: The “AI fully replaces your SDR team” pitch has crashed in 2026. Common Room and Salesforge have published critiques of the fully autonomous model, and operators behind AiSDR, Artisan, and 11x deployments have quietly reverted to hybrid human+AI workflows. The companies still winning with AI SDRs are the ones that own their stack, keep a human in the loop, and treat the AI as research and drafting muscle, not as a replacement for judgment. Here is the data, the four failure modes, and what a working setup actually looks like.

In 2024, the pitch was irresistible. Plug in your ICP, drop a credit card, and a fully autonomous AI SDR would research, write, send, qualify, and book demos for you. No salary, no ramp time, no sick days. The whole BDR function would dissolve into a $1,000/month software bill.

Eighteen months later, the receipts are in. They are not flattering.

The 2026 reset: the data nobody wants on the homepage

Three independent signals tell the same story.

1. Common Room published a piece in February titled “The AI SDR is dead, long live the AI SDR.” They did not bury the lede. The fully autonomous model, the one Artisan and 11x sold hardest, did not deliver pipeline at scale. What worked was agentic pipeline generation with humans driving.

2. The “we tried 10 AI SDR tools” review cycle. Coldreach, Salesforge, SignalFire, and Salesmotion all ran their own teardowns of AiSDR, Artisan, 11x, Jazon, and the rest. The verdicts converged: research and drafting are useful; fully closed-loop sending without supervision is not.

3. The companies that bought in are quietly rolling back. Multiple post-mortems from operators who deployed Artisan or 11x as full SDR replacements describe the same pattern: meeting volume looks fine for 60 days, then deliverability collapses, reply quality drops, and the team reverts to a hybrid setup or back to human SDRs entirely.

This is not “AI does not work for outbound.” AI works extremely well for outbound. The specific bet that broke was: “let the AI run the entire loop without human judgment in it.”

Why the autonomous model failed: 4 failure modes

1. Personalization at scale is a math problem the AI lost

The pitch was hyper-personalization on every email. The reality is that LLMs personalize about as deeply as the inputs you feed them. A SaaS AI SDR has access to whatever public signals it scrapes (LinkedIn title, company size, recent funding). That is the same surface area a half-decent human SDR has. The personalization layer ends up being a slightly better mail-merge.

When every AI SDR vendor uses the same foundation models on the same public data, the outputs converge. By Q4 2025, prospects were getting three near-identical “I noticed your team is hiring” emails per week from three different vendors. The novelty premium evaporated.

2. Reply rates collapsed because the inbox got AI-aware

Buyers in 2026 have a tuned filter. They can spot AI outreach in two seconds: the suspiciously perfect grammar, the “I was researching companies in your space” opener, the sentence that pivots from compliment to pitch in one breath. Once a prospect tags an email as AI, the reply rate drops to near zero, regardless of the offer.

The autonomous SDRs are the loudest source of this signal. They send the most volume, with the least variation, and they do not stop when a sequence is clearly burning the domain.

3. Deliverability fell off a cliff

The autonomous tools optimize for volume. Volume burns sender reputation. Most teams who deployed Artisan or 11x at scale watched their domain reputation collapse inside 90 days. Mailbox providers (Google, Microsoft) tightened spam thresholds in late 2025 specifically targeting the patterns these tools generate: high-volume, low-engagement, templated structure with rotating proxies.

A human SDR sending 50 personalized emails per day from a warm domain still gets to the inbox. An autonomous AI sending 500 per day from a fresh domain ends up in the spam folder by week 6. The autonomous tools sell the volume but cannot maintain the deliverability to make that volume worth anything.
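One way to keep a custom setup on the human side of that comparison is to meter sends per sender and refuse anything past a daily cap. The sketch below assumes you control the send path yourself; the class name `DailySendBudget` is hypothetical, and the default cap of 50 simply mirrors the human SDR figure above, not a threshold published by any mailbox provider.

```python
from datetime import date
from typing import Optional


class DailySendBudget:
    """Counts sends per sender per calendar day and refuses sends past a fixed cap.

    Hypothetical helper: the cap is an assumption to tune per domain, not a
    number published by Google or Microsoft.
    """

    def __init__(self, max_per_day: int = 50) -> None:
        self.max_per_day = max_per_day
        # (sender, day) -> number of sends already recorded for that day
        self._counts: dict[tuple[str, date], int] = {}

    def try_consume(self, sender: str, day: Optional[date] = None) -> bool:
        """Record one send and return True, or return False if the sender is at the cap."""
        key = (sender, day or date.today())
        used = self._counts.get(key, 0)
        if used >= self.max_per_day:
            return False
        self._counts[key] = used + 1
        return True

    def remaining(self, sender: str, day: Optional[date] = None) -> int:
        """How many sends the sender has left for that day."""
        key = (sender, day or date.today())
        return self.max_per_day - self._counts.get(key, 0)
```

Keying the count by sender and date means the cap resets each day and applies to each mailbox independently, so one busy sequence cannot spend another sender's budget.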

4. The judgment layer cannot be outsourced

Outbound has soft signals: this prospect just got promoted, this company just lost its biggest customer, this lead replied with “not now” but the next person we should reach is their VP. A human SDR catches these. The fully autonomous AI does not. It pattern-matches on what looks like a buying signal and ignores the rest.

The vendors who tried to bolt judgment on top (with “AI agents” reading replies and routing them) ended up shipping janky logic that misclassifies more than half the time. The cost of a bad classification is a burned relationship, which the dashboard does not show.
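The alternative to a black-box judgment layer is to let the classifier act only where it is both confident and the stakes are low, and hand everything else to a person. A minimal sketch, assuming some upstream model already returns an intent label and a confidence score; the intent labels, the 0.9 threshold, and the names `ClassifiedReply` and `route_reply` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical intents considered safe to handle automatically.
AUTO_HANDLED_INTENTS = {"unsubscribe", "out_of_office", "meeting_request"}


@dataclass
class ClassifiedReply:
    """A prospect reply after an upstream model has labeled it (model not shown)."""
    reply_id: str
    intent: str        # e.g. "meeting_request", "not_now", "referral"
    confidence: float  # model confidence in [0.0, 1.0]


def route_reply(reply: ClassifiedReply, threshold: float = 0.9) -> str:
    """Return "auto" for confident, low-stakes replies and "human" for everything else."""
    if reply.intent in AUTO_HANDLED_INTENTS and reply.confidence >= threshold:
        return "auto"
    # "Not now", referrals, objections, and any low-confidence label go to a person,
    # because a wrong automated response can burn the relationship.
    return "human"
```

With this rule a confident `"not_now"` still goes to a human, since that intent is not on the allow-list; confidence alone never unlocks automation.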

What the survivors did: human-in-the-loop, custom-built

The teams still hitting their numbers with AI in their outbound stack share a clear pattern.

They use AI for the parts where AI is genuinely better than humans:

- Prospect research at scale (reading 50 LinkedIn profiles, 50 company sites, 50 recent news mentions, in seconds)

- Drafting first-pass email copy from that research

- Multi-touch scheduling and follow-up timing

- Reading replies to classify sentiment and intent

- Pushing data into the CRM cleanly

They keep humans in for the parts where judgment matters:

- Approving (or editing) the drafted email before send

- Calling out the prospect that the AI flagged as cold but the human knows is hot

- Handling “let me think about it” replies (where empathy and timing beat templates)

- Deciding when to break the sequence
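The approve-before-send step is easiest to trust when it is enforced in code rather than by convention: a draft cannot reach the send function until a human has approved it, optionally after editing. A minimal in-memory sketch; `ReviewQueue`, `Draft`, and the injected `send_fn` are hypothetical, and a real setup would persist the queue and surface it wherever reviewers already work.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    SENT = "sent"


@dataclass
class Draft:
    draft_id: str
    to: str
    body: str
    status: Status = Status.PENDING


class ReviewQueue:
    """Holds AI-drafted emails until a human approves, edits, or rejects them."""

    def __init__(self) -> None:
        self._drafts: dict[str, Draft] = {}

    def submit(self, draft: Draft) -> None:
        self._drafts[draft.draft_id] = draft

    def approve(self, draft_id: str, edited_body: Optional[str] = None) -> None:
        draft = self._drafts[draft_id]
        if edited_body is not None:
            draft.body = edited_body  # the reviewer's edit replaces the AI draft
        draft.status = Status.APPROVED

    def reject(self, draft_id: str) -> None:
        self._drafts[draft_id].status = Status.REJECTED

    def send_approved(self, send_fn: Callable[[Draft], None]) -> list[str]:
        """Send approved drafts only; pending and rejected drafts never reach send_fn."""
        sent = []
        for draft in self._drafts.values():
            if draft.status is Status.APPROVED:
                send_fn(draft)
                draft.status = Status.SENT
                sent.append(draft.draft_id)
        return sent
```

Marking each draft as sent right after `send_fn` returns keeps a second call from sending it again.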

This is not “humans doing the same job slower with AI as a sidekick.” It is a different role. A single SDR with a well-built AI layer covers the prospecting research and drafting that used to take a 5-person team, and spends their time on the 20% of conversations that close deals.

Why custom-built AI SDRs win this new model

Here is where the SaaS pitch falls apart hardest. SaaS AI SDR platforms were architected for the autonomous fantasy: end-to-end, hands-off, no escape valves. They are bad at the human-in-the-loop pattern because:

- Their “approve before send” UX is bolted on if it exists at all

- Their CRM integrations are shallow (read/write a few standard fields, not your custom playbook)

- Their data is on their servers, so you cannot run your own AI/ML on top of it

- Their pricing is per-seat or per-volume, so adding a human reviewer costs more, not less

- Their “judgment layer” is a black box you cannot tune

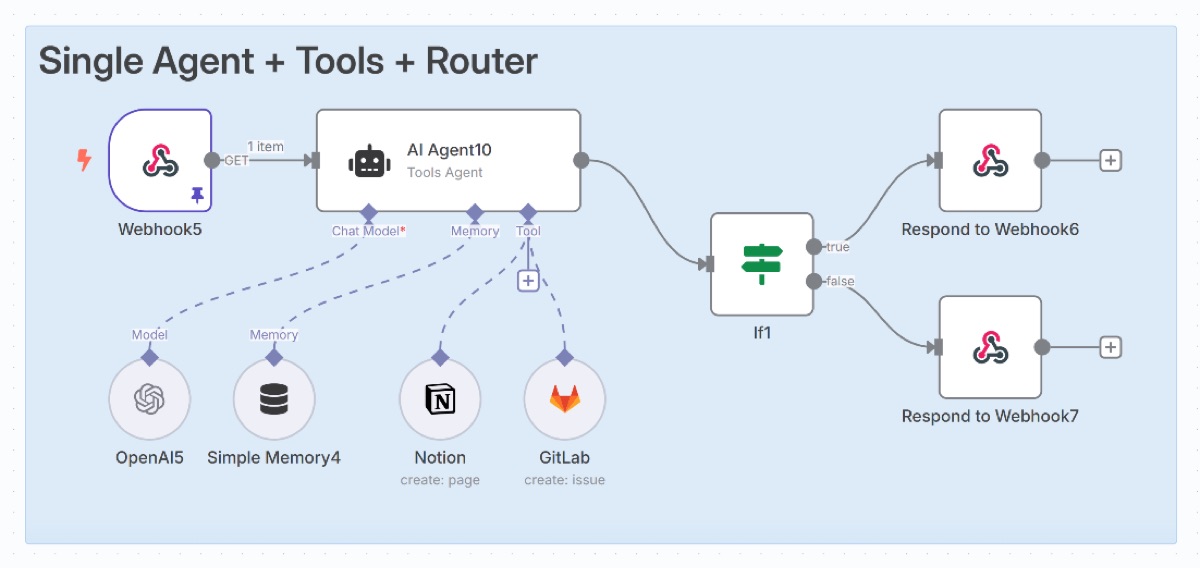

A custom-built AI SDR, deployed on your infrastructure with your CRM and your sequences, lets you wire in human review wherever you need it. You can have the AI draft each email, queue it in Slack or your inbox for one-click approval, then send and move on. You can route ambiguous replies to a human while auto-handling clear ones. You can rebuild the prompts in an afternoon when your messaging changes, without filing a feature request.
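Keeping prompts as plain, versioned text in your own repository is what makes an afternoon rewrite realistic. A minimal sketch using Python's standard `string.Template`; the prompt wording, the field names, and `build_draft_prompt` are hypothetical placeholders for your own messaging and research fields.

```python
from string import Template

# Hypothetical prompt, stored as plain text in your own repository so it is
# versioned with your code and survives any vendor switch.
DRAFT_PROMPT_V2 = Template(
    "You are drafting a first-touch email for $sender_name at $sender_company.\n"
    "Prospect: $prospect_name, $prospect_title at $prospect_company.\n"
    "Research notes:\n$research_notes\n"
    "Write under 120 words. No flattery opener. End with one specific question."
)


def build_draft_prompt(prospect: dict, research_notes: list[str], sender: dict) -> str:
    """Fill the template; Template.substitute raises KeyError if a placeholder is left unfilled."""
    return DRAFT_PROMPT_V2.substitute(
        sender_name=sender["name"],
        sender_company=sender["company"],
        prospect_name=prospect["name"],
        prospect_title=prospect["title"],
        prospect_company=prospect["company"],
        research_notes="\n".join(f"- {note}" for note in research_notes),
    )
```

Changing the messaging then means editing one template and reviewing the diff, rather than waiting on a vendor's roadmap.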

That is the architecture that wins in 2026.

What a working AI SDR setup looks like in 2026

If you are deciding what to build (or what to replace), four things matter more than vendor selection.

1. Own your prompts and your data. The biggest mistake in 2024-2025 was paying SaaS vendors to learn from your data. When you switch tools, that learning leaves with them. Custom-built means your prompts, your sequences, your enrichment logic, your reply classification, all stay yours.

2. Keep the human review step, even if it is fast. A 10-second human glance at each email before send is enough to catch the obvious AI tells, the wrong context, the burning bridges. That review step is what kept reply rates above 5% in 2026 deployments.

3. Build for low volume, high signal. The vendors optimized for volume because their pricing rewarded it. The actual game in 2026 is fewer emails, better targeted, with a sender domain that has earned its reputation. Aim for 50-150 well-researched touches per SDR per day, not 500 templated ones.

4. Wire it to your CRM, not their CRM. SaaS AI SDRs come with a parallel system of record you have to reconcile. Custom builds write directly to HubSpot/Salesforce/Pipedrive/Attio, with the field mapping and stage logic you actually use. No reconciliation, no double entry, no broken handoff.
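The field mapping and stage logic can live in a small, reviewable function of your own. The sketch below stops at building the update payload, because the actual write goes through whichever official client your CRM provides; every stage name, field name, and helper here is a hypothetical placeholder for the ones in your own HubSpot, Salesforce, Pipedrive, or Attio instance.

```python
from dataclasses import dataclass

# Hypothetical mapping from reply intents to *your* pipeline stages.
INTENT_TO_STAGE = {
    "meeting_request": "Meeting Booked",
    "not_now": "Nurture",
    "referral": "Referred",
    "unsubscribe": "Do Not Contact",
}


@dataclass
class OutboundResult:
    email: str
    intent: str
    sequence_name: str
    touches_sent: int


def to_crm_payload(result: OutboundResult) -> dict[str, object]:
    """Build a record update using your own field names; unmapped intents stay in "Working"."""
    return {
        "email": result.email,
        "lifecycle_stage": INTENT_TO_STAGE.get(result.intent, "Working"),
        "ai_sdr_sequence": result.sequence_name,
        "ai_sdr_touches": result.touches_sent,
        "ai_sdr_last_intent": result.intent,
    }
```

Because the mapping is ordinary code in your repository, a stage rename in the CRM is a one-line change rather than a reconciliation job.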

The bottom line

Fully autonomous AI SDRs are a 2024 idea that did not survive the 2026 inbox. The companies still hitting their numbers with AI in outbound run a hybrid stack with humans on the judgment calls and AI on the research and drafting muscle. SaaS tools were built for the autonomous fantasy and are awkward in the new model. Custom builds, deployed on your infrastructure, with your prompts, your CRM, and a review step you control, are where the leverage is now.

If you are still paying $900-5,000 per month for an autonomous AI SDR and your reply rates are dropping, that is the signal. The model itself is the issue, not the vendor.

Get a Free AI Audit and we will scope what a custom-built AI SDR looks like for your team in 72 hours.

Related reading

- How to Build Your Own AI SDR Agent in 72 Hours: the technical step-by-step build guide with prompts and stack details.

- Best AI SDR Tools 2026: 7 SaaS Platforms vs Custom Build: honest comparison across AiSDR, Artisan, 11x, Lyzr, and the custom build alternative.

- Custom AI SDR Agents service: pricing, scope, and what the 72-hour build covers.