How to Build Your Own AI SDR Agent in 72 Hours: The Complete 2026 Guide

TL;DR: A custom AI SDR agent takes 72 hours to ship if you scope it correctly. The stack: Claude for research and drafting, LangChain or a thin orchestrator for the loop, n8n for CRM and email plumbing, and your own warmed sender domains. Total cost: roughly $2,500 of build time and $15-50/month in API usage. This guide walks through the exact 3-day plan, the prompts that actually work, and the 4 things that kill most builds before they ship.

By 2026, “build vs buy” for AI SDR is no longer a debate. SaaS AI SDRs (AiSDR, Artisan, 11x) are wrappers around the same foundation models you can call directly. They charge $900-5,000/month for a UI, some templates, and managed infrastructure. The custom build path is now well-trodden: 72 hours, around $2,500 in build time, and you own the code forever.

This is the guide for the technical operator (founder, sales engineer, AI-curious dev) who wants to ship it. Step by step.

Day 0: Prerequisites you need before you start

Skip these and the build will collapse on day 4. Set them up first.

1. ICP definition that fits in one paragraph. If you cannot describe your ideal customer in 3 sentences (industry + size + buying trigger), the AI cannot either. Your AI SDR is only as targeted as the ICP you feed it. Spend 90 minutes writing this down before you write any code.

2. Sender domain infrastructure. You need at least 2-3 satellite domains, with SPF, DKIM, and DMARC properly configured, and a 2-week minimum warmup period using something like Smartlead or Instantly. Sending from your main domain (the one your real business runs on) will burn it. Burning your main domain is a 6-month recovery problem.

3. CRM with API access. HubSpot Free, Salesforce, Pipedrive, Close, Attio: any of these work. The agent needs to read ICP filters, write back contact records, and update deal stages. If your CRM is a spreadsheet, fix that first.

4. A small enrichment budget. Apollo, Clearbit, or Findymail for contact lookup. Budget around $50-200/month depending on volume. The AI cannot guess email addresses reliably.

If those four are not in place, building the agent is the wrong next step. Get those right first.

Day 1: Discovery and architecture (4-6 hours)

This is the day you do not write code. You map the system.

Define the agent loop

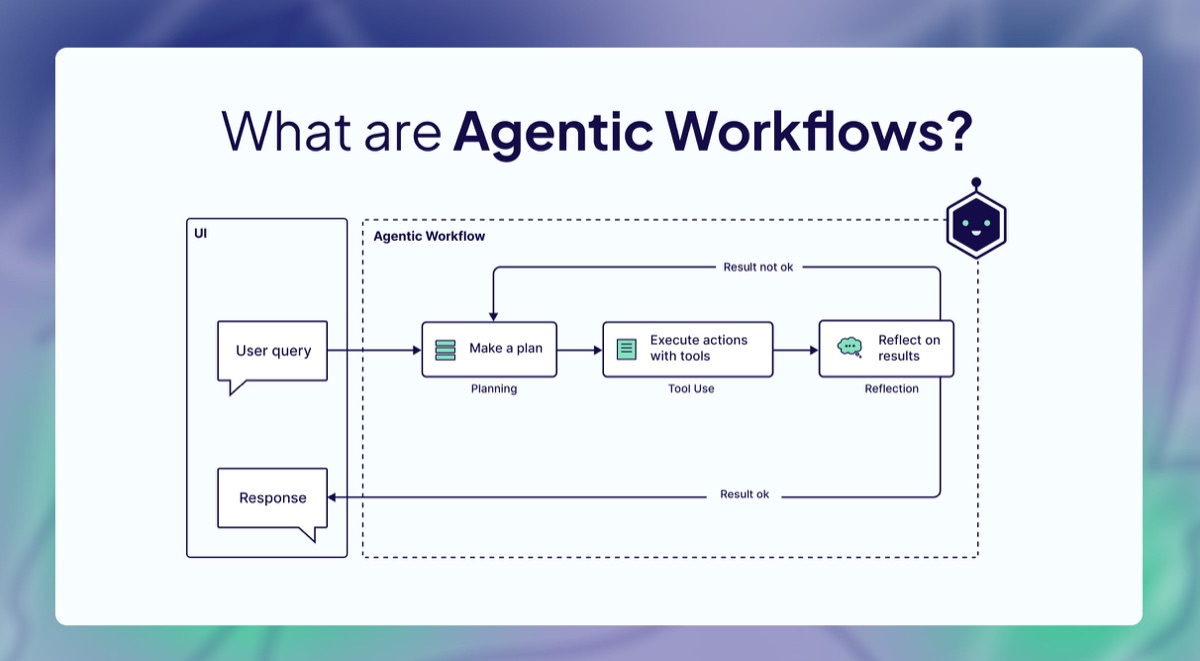

Every AI SDR agent runs the same 6-step loop. Map yours explicitly:

- Source prospects (from where, with what filters)

- Enrich prospect data (which fields, from which provider)

- Research each prospect (what signals, in what depth)

- Personalize the email (against what context)

- Send with sequencing (how many touches, what delays)

- Classify replies and route (interested, not now, unsubscribe)

For each step, decide: is this synchronous or background, what is the input, what is the output, what does failure look like.
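One way to keep those four decisions honest is to write them down as data before you write any logic. This is a minimal sketch: the `StepSpec` name and every example value are illustrative assumptions, not part of any library, and your own mapping will differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepSpec:
    name: str
    mode: str        # "sync" or "background"
    input: str       # what the step consumes
    output: str      # what the step produces
    on_failure: str  # what happens when the step fails


# Example values only; replace them with your own Day 1 mapping.
AGENT_LOOP = [
    StepSpec("source", "background", "ICP filters", "prospect ids", "retry on next scheduled run"),
    StepSpec("enrich", "background", "prospect id", "contact and company fields", "mark prospect as skipped"),
    StepSpec("research", "background", "enriched prospect", "signal JSON", "retry once, then flag for review"),
    StepSpec("personalize", "background", "signal JSON + offer", "email draft", "flag for review"),
    StepSpec("send", "background", "approved draft", "queued sequence", "pause sequence and alert"),
    StepSpec("classify", "sync", "reply text", "category", "route to a human"),
]

# Cheap sanity check: every step must declare one of the two modes.
for step in AGENT_LOOP:
    assert step.mode in {"sync", "background"}, step.name
```

If a row is hard to fill in, that step is not scoped yet.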

Pick the stack

Stack that works in 2026 (battle-tested across multiple deployments):

- Claude Sonnet 4.6 or Opus 4.7 for research and drafting. Outperforms GPT on nuanced B2B messaging and is cheaper at the volume you will run.

- LangChain or a thin Python orchestrator for the loop. LangChain is fine if you know it; otherwise a 200-line Python script with the anthropic SDK is enough.

- n8n for the plumbing: CRM webhooks, email sending, scheduled triggers, retry logic. Avoid hand-rolling this in code.

- Postgres or Supabase for the prospect state machine. Each prospect has a status: queued, researching, drafted, sent, replied, qualified.

- Smartlead or Instantly for actual sending. Their warm-up and rotation logic is mature; do not rebuild it.

- Apollo or Findymail for enrichment.

That is the whole stack. No vector DB, no embedding store, no fine-tuning. The model is good enough out of the box; the work is in the prompts and the orchestration.

Decide the sequence shape

A working 2026 sequence looks like:

- Day 0: Email 1, fully personalized, opens with a researched signal

- Day 3: Email 2, shorter, different angle

- Day 7: Email 3, breakup-style, “moving on if no response”

- Day 14: LinkedIn touch (manual or via Phantombuster/HeyReach)

Three emails is the new ceiling. Five-touch sequences burn deliverability without lifting reply rates.
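The sequence above is easy to express as data, which makes the delays explicit and testable. A minimal sketch, assuming calendar days rather than business days (the guide does not say which); the `SEQUENCE` and `schedule` names are made up for illustration.

```python
from datetime import date, timedelta

# (day offset, channel, note) copied from the sequence above.
SEQUENCE = [
    (0, "email", "personalized opener"),
    (3, "email", "shorter, different angle"),
    (7, "email", "breakup"),
    (14, "linkedin", "LinkedIn touch"),
]


def schedule(start: date) -> list:
    """Return (date, channel, note) for every touch of a prospect that starts on `start`."""
    return [
        (start + timedelta(days=offset), channel, note)
        for offset, channel, note in SEQUENCE
    ]


touches = schedule(date(2026, 1, 5))
for when, channel, note in touches:
    print(when.isoformat(), channel, note)
```

In practice Smartlead or Instantly owns the actual timing; a table like this is mainly useful for keeping your CRM follow-up dates in agreement with the sending tool.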

Day 2: Build the research and drafting layer (8-10 hours)

This is the meat of the build. The two prompts you write here determine 80% of your reply rate.

The research prompt

The agent reads a prospect’s LinkedIn profile, their company website, and recent news, and produces a structured signal document. Example shape:

research_prompt = """

You are a B2B sales researcher. Given the prospect data below, produce

a JSON object with these fields:

- recent_signal: one specific thing happening at this company in the last 60 days

(funding, hire, product launch, layoff, expansion). Use the date.

- pain_point: the most likely operational pain given their stack and growth stage.

- buying_trigger: why they would care about our offer now, not in 6 months.

- conversation_hook: one sentence I could open a cold email with that proves

I read about them. Specific. No generic compliments.

Prospect data:

{prospect_data}

Return only valid JSON. No commentary.

"""

Key points:

- Force structured output (JSON). Free-text outputs are inconsistent.

- Make every field about the prospect, not your offer. The model has to do the work of understanding their context.

- Include a date constraint on the signal. “Recent” is too vague.
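Forcing JSON only pays off if you reject replies that are not the JSON you asked for. The sketch below checks the four fields named in the research prompt; `parse_research` is a hypothetical helper name, and it is deliberately strict (it does not try to repair or unwrap malformed output).

```python
import json

REQUIRED_FIELDS = ("recent_signal", "pain_point", "buying_trigger", "conversation_hook")


def parse_research(raw: str) -> dict:
    """Parse the model's reply; raise ValueError if it is not the expected JSON object."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [
        field for field in REQUIRED_FIELDS
        if not isinstance(data.get(field), str) or not data[field].strip()
    ]
    if missing:
        raise ValueError(f"missing or empty fields: {missing}")
    return data
```

A prospect whose research fails this check should stay in the researching state and be retried or flagged, not passed on to drafting.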

The drafting prompt

Given the research output and your offer, draft the email. Example:

draft_prompt = """

You are writing a cold email from {sender_name} at {sender_company}.

Voice: direct, peer-to-peer, no fluff, no AI tells like "I noticed your team is hiring."

Length: under 90 words.

Offer: {offer_one_liner}

Research about the prospect:

{research_json}

Email structure:

1. Open with the conversation_hook. One sentence.

2. Connect the buying_trigger to the offer. One sentence.

3. Ask one specific question they can reply to with yes/no/maybe.

4. Sign off with first name only.

No subject line gimmicks. No "quick question." No "I hope this finds you well."

Output only the email body. Plain text.

"""

Test this prompt on 20 prospects, read every output, and edit the prompt until 18 of 20 emails are good enough to send unedited. That iteration loop takes 2-3 hours. It is the most leveraged time in the whole build.
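Some of the drafting rules are mechanical, so a small linter can pre-screen drafts before you read them. This is a sketch under assumptions: `lint_draft` and `pass_rate` are hypothetical helpers, the banned phrases are the ones quoted in the prompt, and counting question marks is only a rough proxy for "one specific question". It does not replace reading the 20 emails.

```python
MAX_WORDS = 90  # the prompt asks for "under 90 words"

# Phrases the drafting prompt explicitly rules out.
BANNED_PHRASES = (
    "quick question",
    "i hope this finds you well",
    "i noticed your team is hiring",
)


def lint_draft(body: str) -> list:
    """Return a list of problems; an empty list means the mechanical checks pass."""
    problems = []
    word_count = len(body.split())
    if word_count >= MAX_WORDS:
        problems.append(f"too long: {word_count} words")
    lowered = body.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            problems.append(f"banned phrase: {phrase!r}")
    if body.count("?") != 1:
        problems.append("expected exactly one question")
    return problems


def pass_rate(drafts: list) -> float:
    """Share of drafts with no mechanical problems (the target above is 18 of 20)."""
    return sum(1 for draft in drafts if not lint_draft(draft)) / len(drafts)
```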

Wire the research, drafting, and sending into a queue

The orchestration logic in pseudocode:

for prospect in icp_query():
    research = claude(research_prompt, prospect)
    draft = claude(draft_prompt, research, offer)
    insert_into_smartlead(draft, prospect, sequence_id)
    log_to_crm(prospect, status="queued")

Run this against 50 prospects on Day 2 evening. Read every email manually. Tune the prompts. Do not send yet.
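Here is one way the pseudocode could look as runnable Python. Treat it as a sketch, not the reference implementation: `make_claude_complete`, `run_batch`, and `on_draft` are names invented here, the model ID is left for you to supply, and the Smartlead and CRM calls are hidden behind the `on_draft` callback because their APIs are outside the scope of this guide. The only external call used is `client.messages.create` from the Anthropic Python SDK.

```python
import json
from typing import Callable, Iterable

Complete = Callable[[str], str]  # takes a rendered prompt, returns the model's text


def make_claude_complete(model: str, max_tokens: int = 1024) -> Complete:
    """Build a completion function backed by the Anthropic SDK (pip install anthropic)."""
    import anthropic  # imported lazily so run_batch can be tested without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

    def complete(prompt: str) -> str:
        response = client.messages.create(
            model=model,
            max_tokens=max_tokens,
            messages=[{"role": "user", "content": prompt}],
        )
        return response.content[0].text

    return complete


def run_batch(
    prospects: Iterable[dict],
    complete: Complete,
    research_prompt: str,
    draft_prompt: str,
    sender: dict,  # needs sender_name, sender_company, offer_one_liner
    on_draft: Callable[[dict, str], None],
) -> int:
    """Research and draft each prospect, handing drafts to `on_draft` instead of sending."""
    drafted = 0
    for prospect in prospects:
        try:
            research = complete(research_prompt.format(prospect_data=json.dumps(prospect)))
            json.loads(research)  # fail fast if the model did not return JSON
        except ValueError as exc:
            print(f"skipping {prospect.get('email')}: bad research output ({exc})")
            continue
        draft = complete(draft_prompt.format(research_json=research, **sender))
        on_draft(prospect, draft)
        drafted += 1
    return drafted
```

For the Day 2 dry run, `on_draft` can simply append to a list or write to a review table. Passing the completion function in as an argument also lets you test the loop with a fake model before spending any API budget.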

Day 3 morning: Reply classification and CRM sync (3-4 hours)

When replies come back, the agent has to read them and decide what to do.

classify_prompt = """

Classify this reply into exactly one category:

- interested: wants to talk, asked for a meeting, said yes

- objection: pushing back on price, timing, fit (treat as warm)

- not_now: too busy, ask in Q3, etc. (treat as nurture)

- unsubscribe: stop, remove me, etc. (treat as opt-out)

- bounce: out of office, no longer at company

Reply text: {reply_body}

Return only the category name.

"""

Wire each category to a CRM action:

- interested → create deal, notify SDR on Slack, pause sequence

- objection → flag for human review

- not_now → tag for re-engagement in 90 days, pause sequence

- unsubscribe → add to global suppression, pause all sequences

- bounce → delete contact

This is where most builds get sloppy. The classifier is right 92-95% of the time, which means 5-8% of replies get mishandled. Build a Slack alert for any reply that the classifier scores as low-confidence so a human can break the tie.
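The category-to-action wiring can be sketched as a lookup with a safe default. Two assumptions to flag: the action names are placeholders for whatever your n8n workflow or CRM client exposes, and because the classify prompt as written returns only a label, this sketch treats any output outside the five categories as the low-confidence case. Getting a real confidence score would require changing the prompt.

```python
CATEGORIES = {"interested", "objection", "not_now", "unsubscribe", "bounce"}

# Placeholder action names mirroring the list above.
ACTIONS = {
    "interested": ["create_deal", "notify_slack", "pause_sequence"],
    "objection": ["flag_for_human_review"],
    "not_now": ["tag_reengage_90d", "pause_sequence"],
    "unsubscribe": ["add_to_global_suppression", "pause_all_sequences"],
    "bounce": ["delete_contact"],
}

HUMAN_FALLBACK = ["flag_for_human_review", "notify_slack"]


def route(model_output: str) -> list:
    """Map the classifier's raw output to actions; unknown labels go to a human."""
    label = model_output.strip().strip(".\"'").lower()
    if label not in CATEGORIES:
        return HUMAN_FALLBACK
    return ACTIONS[label]
```

Defaulting to human review means a classifier failure costs a few seconds of attention instead of a mishandled prospect.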

Day 3 afternoon: Deploy, dry-run, and tune (3-4 hours)

Run the agent end-to-end on 50-100 prospects with sending paused. Read every email. Score each one: would I send this? If yes-rate is below 90%, the prompts need another pass.

When 90%+ are sendable, flip sending on, but cap at 50 emails on the first day of live sending. Watch the reply rate, deliverability metrics, and bounce rate. Stay at or below 100/day for the rest of the first week. Scale only when sender reputation is clearly stable.
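The go-live gate and the ramp are simple enough to encode so nobody raises volume by accident. A minimal sketch; the function names are hypothetical, and the guide gives no fixed number after week one, so the cap function returns `None` at that point.

```python
from typing import Optional


def ready_to_send(sendable: int, reviewed: int, threshold: float = 0.90) -> bool:
    """True when the share of reviewed drafts you would send reaches the threshold."""
    return reviewed > 0 and sendable / reviewed >= threshold


def daily_send_cap(sending_day: int) -> Optional[int]:
    """Cap for the Nth day of live sending (1-based); None means no fixed cap here."""
    if sending_day < 1:
        raise ValueError("sending_day starts at 1")
    if sending_day == 1:
        return 50
    if sending_day <= 7:
        return 100
    return None  # after week one, scale only if sender reputation is stable


print(ready_to_send(sendable=46, reviewed=50))
print([daily_send_cap(day) for day in (1, 2, 7, 8)])
```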

That is the whole build. Three days, one focused human, around $80 of API usage to test on 200 prospects.

The 4 pitfalls that kill AI SDR builds

These are the patterns I have watched kill builds across multiple client deployments. Avoid them.

1. Treating the prompts as set-and-forget. Prompts decay. The market changes, your offer evolves, prospects develop new tells. Re-tune the prompts every 4-6 weeks against fresh data. Most builds that “stop working” are actually prompts that drifted.

2. Sending without a human in the queue. Even if the queue review takes 30 seconds per email, do it for the first 2-3 weeks. You will catch the AI tells, the wrong context, the sequence that is burning a domain. After week 3, the prompt is dialed in and you can move to spot-checking.

3. Building enrichment from scratch. The “I will scrape LinkedIn myself” trap. LinkedIn breaks scrapers every 60 days. Pay Apollo or Findymail. The cost is trivial compared to the engineering time.

4. No state machine. Without an explicit prospect state field (queued, researching, drafted, sent, replied, qualified), you will end up with double sends, missed follow-ups, and a CRM that does not match reality. Spend an hour on the schema before you write the loop.
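A state machine here can be as small as one status column plus a table of legal moves. The sketch below uses the statuses from the stack section and Python's built-in `sqlite3` as a stand-in for Postgres or Supabase. The strictly linear transition table, the table layout, and the `advance` helper are assumptions for illustration; a real build would add branches such as a skipped or opted-out state.

```python
import sqlite3

# Legal moves between statuses; anything else is rejected.
TRANSITIONS = {
    "queued": {"researching"},
    "researching": {"drafted"},
    "drafted": {"sent"},
    "sent": {"replied"},
    "replied": {"qualified"},
    "qualified": set(),
}


def init_db(conn: sqlite3.Connection) -> None:
    conn.execute(
        """CREATE TABLE IF NOT EXISTS prospects (
               email  TEXT PRIMARY KEY,
               status TEXT NOT NULL DEFAULT 'queued'
           )"""
    )


def advance(conn: sqlite3.Connection, email: str, new_status: str) -> bool:
    """Move a prospect to `new_status` only if that is a legal next step."""
    row = conn.execute(
        "SELECT status FROM prospects WHERE email = ?", (email,)
    ).fetchone()
    if row is None or new_status not in TRANSITIONS.get(row[0], set()):
        return False
    # Compare-and-set: only update if the status is still what we just read.
    cursor = conn.execute(
        "UPDATE prospects SET status = ? WHERE email = ? AND status = ?",
        (new_status, email, row[0]),
    )
    conn.commit()
    return cursor.rowcount == 1


conn = sqlite3.connect(":memory:")
init_db(conn)
conn.execute("INSERT INTO prospects (email) VALUES (?)", ("a@example.com",))
print(advance(conn, "a@example.com", "researching"))  # legal move
print(advance(conn, "a@example.com", "sent"))         # skips "drafted", so it is rejected
```

Because the send step only runs when `advance(..., "sent")` returns `True`, a retry or a second worker cannot send the same email twice.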

What this looks like running

A working 2026 setup, one human + one AI SDR agent:

- 50-150 personalized touches per day per sender domain

- 4-8% reply rate (vs 1-2% for templated SaaS sends)

- 1-3 qualified meetings per day at full volume

- 30 minutes per day of human queue review

- Total monthly cost: $50-150 in API + $80-200 in enrichment + $30-100 in sending infra

Compare that to a SaaS AI SDR at $900-5,000/month with worse personalization and zero ownership of the prompts or data.
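The comparison is worth doing as arithmetic rather than by feel. This sketch uses only figures stated in this guide: the three monthly cost ranges above, the $900-5,000 SaaS range, and the roughly $2,500 of build time. The variable names are arbitrary.

```python
import math

# Monthly ranges (low, high) in USD, copied from the list above.
CUSTOM = {"api": (50, 150), "enrichment": (80, 200), "sending": (30, 100)}
SAAS = (900, 5000)
BUILD_COST = 2500  # one-time build cost quoted earlier in this guide

custom_low = sum(low for low, _ in CUSTOM.values())
custom_high = sum(high for _, high in CUSTOM.values())

# Most conservative case: most expensive custom month vs the cheapest SaaS plan.
worst_case_gap = SAAS[0] - custom_high
worst_case_months = math.ceil(BUILD_COST / worst_case_gap)

print(f"custom running cost: ${custom_low}-{custom_high}/month")
print(f"smallest monthly saving vs SaaS: ${worst_case_gap}")
print(f"months to recoup the build in that case: {worst_case_months}")
```

Even in the least favorable pairing, the build cost is recovered in about half a year of avoided SaaS fees. The calculation leaves out the ongoing human review time, which you would spend with either option.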

When NOT to build it yourself

To be straight with you: this guide assumes you (or someone on your team) can ship 1,500 lines of Python and orchestration in 72 hours. If that is not the case, the build will sprawl into 2-4 weeks and the math changes.

If you do not have the technical capacity in-house, a custom build done by an outside agency in 72 hours, owned by you, is still a better deal than 12 months of SaaS payments. That is exactly the work I do at The AI Pipe.

